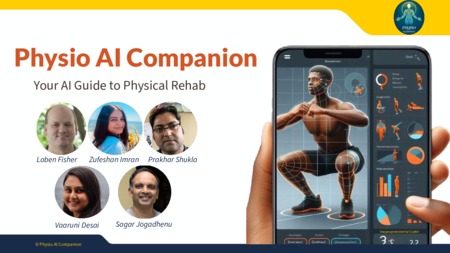

Physio AI Companion

Scripts

| Field | Details |
| --- | --- |
| File Size | |
| File Format | |
| Scope And Content | The Git repository contains all the steps needed to replicate the project work, including instructions for creating a virtual environment from a detailed requirements.txt file. Users can then run the Jupyter notebooks on the provided sample data to generate the sample output, which is also provided. Users can also run app.py in the app directory to host the model and expose an endpoint. Finally, users can launch a visualization app to create additional videos and to visualize the processed data from both the sample data and new data. |
| Technical Details | See the README and requirements.txt files in the GitHub repository for details. Python dependencies are also listed in the requirements.txt file included in the Scripts component. |

Input file

| Field | Details |
| --- | --- |
| File Size | |
| File Format | |
| Scope And Content | The input data consists of one or more video files of a person performing an overhead squat. Where the data should be placed depends on the deployment environment (for example, a local computer or AWS). The instructions on where to put the data are in the source file. |

- Collection

- Cite This Work

- Desai, Vaaruni; Fisher, Laben F.; Imran, Zufeshan; Jogadhenu, Sagar R.; Shukla, Prakhar; Ochoa, Benjamin; Richardson, Brian; Duenas, Kevin; Franco, Malerie (2024). Physio AI Companion. In Data Science & Engineering Master of Advanced Study (DSE MAS) Capstone Projects. UC San Diego Library Digital Collections. https://doi.org/10.6075/J0HM58PG

- Description

- Physical therapists (PTs) and kinesiologists recommend series of exercises but often find it difficult to continuously monitor individuals as they exercise to ensure correct posture and prevent injuries from being aggravated. This research addresses that problem by building a product that automatically detects incorrectly performed exercises and gives users timely feedback. The research effort began with a set of curated exercise videos and a set of biomechanical standards, and developed a core model to analyze a single exercise: the overhead squat. The core model consists of three main steps: preprocessing to standardize the videos; creating a 3D body model; and calculating incorrectness scores, both per repetition and in aggregate, based on measured joint angles. The work combines state-of-the-art computer vision models with computational algorithms to build a customized solution. The results from the core model provide feedback to both practitioners and users through visual overlays on the exercise video and graphical presentations of the biomechanical measures captured during the exercise.
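The joint-angle measurement mentioned in the description can be illustrated with a minimal Python sketch. It computes the angle at a joint from three 3D keypoints (for example hip, knee, and ankle) and derives a simple per-repetition score. It assumes each keypoint is an (x, y, z) coordinate produced by a 3D body model; the function names, the target angle range, and the scoring rule (fraction of frames outside a range, averaged across repetitions) are hypothetical and are not the project's actual biomechanical standards or scoring method.

```python
import math
from typing import Sequence, Tuple

# Assumption: each keypoint is an (x, y, z) coordinate from a 3D body model.
Point3D = Sequence[float]


def joint_angle(a: Point3D, b: Point3D, c: Point3D) -> float:
    """Return the angle in degrees at vertex b between segments b->a and b->c."""
    ba = [ai - bi for ai, bi in zip(a, b)]
    bc = [ci - bi for ci, bi in zip(c, b)]
    dot = sum(x * y for x, y in zip(ba, bc))
    norm = math.hypot(*ba) * math.hypot(*bc)
    if norm == 0:
        raise ValueError("keypoints must be distinct")
    # Clamp to [-1, 1] so floating-point error cannot push acos out of its domain
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


def repetition_score(angles: Sequence[float], target_range: Tuple[float, float]) -> float:
    """Hypothetical incorrectness score: fraction of frames whose angle is outside target_range."""
    low, high = target_range
    if not angles:
        return 0.0
    outside = sum(1 for ang in angles if ang < low or ang > high)
    return outside / len(angles)


def aggregate_score(rep_scores: Sequence[float]) -> float:
    """Hypothetical aggregate: mean of the per-repetition scores."""
    return sum(rep_scores) / len(rep_scores) if rep_scores else 0.0


# Usage: knee angle for one frame (hip, knee, ankle keypoints; made-up coordinates)
knee = joint_angle((0.0, 1.0, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0))  # about 90 degrees
```

This sketch only shows the geometry. A real scoring step would take its target ranges from the curated biomechanical standards and might weight joints or frames differently.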

- Date Collected

- 2024-01-06 to 2024-06-07

- Date Issued

- 2024

- Advisors

- Contributors

- Note

- This project relies on external software packages, modules/libraries, or programs, use of which may carry specific license requirements. Users should comply with any licenses specified within the contents of this project.

- Series

- Topics

- Language

- English

- License

- Creative Commons Attribution-NonCommercial 4.0 International Public License

- Rights Holder

- Desai, Vaaruni; Fisher, Laben F.; Imran, Zufeshan; Jogadhenu, Sagar R.; Shukla, Prakhar

- Copyright

- Under copyright (US)

Use: This work is available from the UC San Diego Library. This digital copy of the work is intended to support research, teaching, and private study.

Constraint(s) on Use: This work is protected by the U.S. Copyright Law (Title 17, U.S.C.). Use of this work beyond that allowed by "fair use" or any license applied to this work requires written permission of the copyright holder(s). Responsibility for obtaining permissions and any use and distribution of this work rests exclusively with the user and not the UC San Diego Library. Inquiries can be made to the UC San Diego Library program having custody of the work.

- Digital Object Made Available By

- Research Data Curation Program, UC San Diego, La Jolla, 92093-0175 (https://lib.ucsd.edu/rdcp)

- Last Modified

- 2024-07-10

Library Digital Collections